Data Mining Project Helps Search Academic Papers Faster, More Efficiently

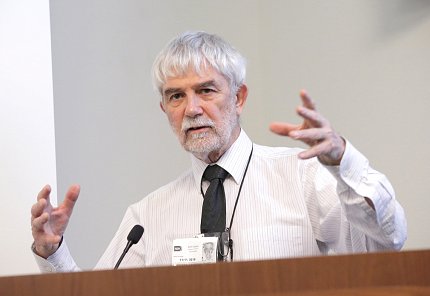

In 1982, European researchers published an article in the Annals of Virology warning the African country Liberia to prepare for an Ebola epidemic, Dr. Peter Murray-Rust explained at a recent Frontiers in Data Science Lecture in Bldg. 35.

When the March 2014 outbreak occurred, public health officials in Liberia had never seen the warning. The paper was behind a paywall.

“If you do not give knowledge to people, you will have bad medicine. And bad medicine means people die,” said Murray-Rust, reader emeritus in molecular informatics, University of Cambridge, and founder of ContentMine.org.

That’s why he founded ContentMine, an open-software data mining project that helps researchers search through academic research papers faster and more efficiently. “We want to liberate a huge number of facts from the literature,” he said.

The software can search thousands of papers in just a few minutes. Previously, this task could take several skilled researchers days or even weeks to complete manually.

The program finds, or crawls, scholarly articles on the web and then downloads, or scrapes, content from those articles onto a server. Then, the program mines the articles for relevant scientific data, which includes information such as abstracts, methods, conclusions, references and captions. The program can also extract data from diagrams, like phylogenetic trees, or chemical equations.
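The mining step described above can be illustrated with a minimal Python sketch. This is not ContentMine's actual code: it assumes an article has already been scraped as HTML and that figure captions are marked up with `<figcaption>` elements, which will not hold for every publisher. The names `CaptionExtractor`, `extract_captions` and `SAMPLE_ARTICLE` are hypothetical.

```python
from html.parser import HTMLParser


class CaptionExtractor(HTMLParser):
    """Collects the text found inside every <figcaption> element."""

    def __init__(self):
        super().__init__()
        self.captions = []   # finished captions, in document order
        self._depth = 0      # > 0 while inside a <figcaption>
        self._buffer = []    # text fragments of the caption being read

    def handle_starttag(self, tag, attrs):
        if tag == "figcaption":
            self._depth += 1
            if self._depth == 1:
                self._buffer = []

    def handle_endtag(self, tag):
        if tag == "figcaption" and self._depth > 0:
            self._depth -= 1
            if self._depth == 0:
                # Join fragments and collapse runs of whitespace.
                text = " ".join("".join(self._buffer).split())
                self.captions.append(text)

    def handle_data(self, data):
        if self._depth > 0:
            self._buffer.append(data)


def extract_captions(html):
    """Return the figure captions found in one scraped article."""
    parser = CaptionExtractor()
    parser.feed(html)
    parser.close()
    return parser.captions


# Hypothetical scraped article, used only for illustration.
SAMPLE_ARTICLE = """
<article>
  <p class="abstract">We describe a conifer phylogeny.</p>
  <figure>
    <img src="tree.png">
    <figcaption>Figure 1. Phylogenetic tree of <i>Pinus</i> species.</figcaption>
  </figure>
</article>
"""

if __name__ == "__main__":
    for caption in extract_captions(SAMPLE_ARTICLE):
        print(caption)
```

A real pipeline would need a separate rule set for each publisher's markup, which is part of the difficulty Murray-Rust describes.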

“One of the most important things in a paper is actually the captions,” Murray-Rust said. “If you look at the captions to the figures—without looking at the paper—you can probably tell what the paper is about because they have known phrases.”

If a user searches for the term “Zika,” for example, he or she will receive a list of articles that include that term. The program will also include information from Wikidata, “an incredible source of additional information,” he noted. Wikidata contains critical numerical metadata such as someone’s age or a country’s population.
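A term search of this kind might look roughly like the following Python sketch. It is an illustration under stated assumptions, not the program's real interface: the in-memory `ARTICLES` list and the functions `search_articles` and `wikidata_search_url` are hypothetical. The second function only builds a query URL for Wikidata's public `wbsearchentities` API action and does not contact the network.

```python
from urllib.parse import urlencode

# Hypothetical mined records; a real corpus would hold thousands of papers.
ARTICLES = [
    {"title": "Zika virus in the Americas",
     "caption": "Figure 1. Reported Zika cases by month."},
    {"title": "Conifer phylogeny",
     "caption": "Figure 2. Phylogenetic tree of Pinus."},
    {"title": "Mosquito-borne disease",
     "caption": "Figure 1. Spread of ZIKA and dengue."},
]


def search_articles(term, articles):
    """Return titles of articles whose mined text mentions the term.

    Matching is case-insensitive and checks every field of each record.
    """
    needle = term.casefold()
    return [
        article["title"]
        for article in articles
        if any(needle in value.casefold() for value in article.values())
    ]


def wikidata_search_url(term, language="en"):
    """Build a Wikidata entity-search URL for the term (no request is sent)."""
    params = {
        "action": "wbsearchentities",
        "search": term,
        "language": language,
        "format": "json",
    }
    return "https://www.wikidata.org/w/api.php?" + urlencode(params)


if __name__ == "__main__":
    print(search_articles("Zika", ARTICLES))
    print(wikidata_search_url("Zika"))
```

Fetching the URL would return candidate Wikidata entities whose metadata could then be shown next to the matching articles.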

In practice, however, crawling, scraping and mining articles isn’t so simple, he noted. There are no standards that publishers follow. Each has its own way of doing things. Some, for example, upload papers to their web sites in formats that are unreadable without special software.

Murray-Rust said one example is a PDF document, which does not contain machine-understandable words. The documents contain characters that cannot be read unless he creates software specifically to convert characters into HTML, a web code that a machine can read. What he does isn’t “rocket science,” rather “it’s just extremely tedious.”

Another obstacle is copyright law, which varies from country to country. In the United Kingdom, for example, Murray-Rust has the right to mine for “personal, non-commercial research.” However, it can be difficult to figure out what’s copyrighted and what isn’t.

“We can legally publish facts, although it’s not absolutely clear what a fact is. But everyone agrees that facts are not copyrightable,” he quipped.

All of his software is open, meaning anyone can download and use it. “I’m delighted if people use what I’ve created,” he noted. Lars, a 15-year-old student from the Netherlands who is interested in conifers, helps him build the software.

“If you write open software and everything is open, people will take it and make new and exciting things,” Murray-Rust said.